The Steve Hoberman Core Collection

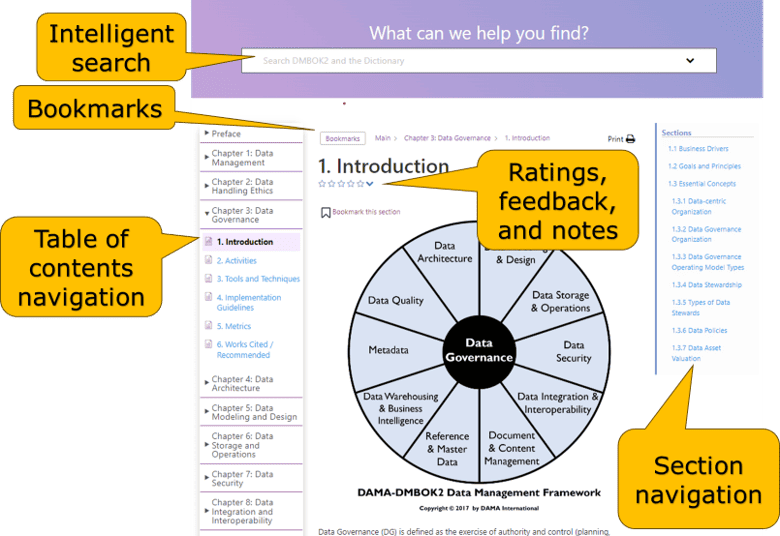

PebbleU is our interactive platform where you can take courses and read our books on any device, as well as annotate, add bookmarks, and perform intelligence searches. A collection is a group of one or more books and courses that you can annually subscribe to on PebbleU. If a collection contains books, you will also receive PDF Instant Downloads of those books. New features weekly!

The Master Class is a complete data modeling course containing three modules. After completing Module 1, you will be able to explain the benefits of data modeling, apply the five settings to build a data model masterpiece, and know how and when to use each data modeling component (entities, attributes, representatives, relationships, subtyping, keys, hierarchies, and networks). After completing Module 2, you will be able to create conceptual, logical, and physical relational and dimensional data models. You will also learn how NoSQL data models differ from traditional in terms of structure and modeling approach. After completing Module 3, you will be able to apply data model best practices through the ten categories of the Data Model Scorecard®. You will know not just how to build a data model, but how to build a data model well. Through the Scorecard, you will be able to incorporate supportability and extensibility features into your data model, as well as assess the quality of any data model.

Case studies and many exercises reinforce the material and will enable you to apply these techniques in your current projects. This course assumes no prior data modeling knowledge and, therefore, there are no prerequisites.

You can learn more about the class and the upcoming schedule here.

This book is written in a conversational style that encourages you to read it from start to finish and master these ten objectives:

1. Know when a data model is needed and which type of data model is most effective for each situation

2. Read a data model of any size and complexity with the same confidence as reading a book

3. Build a fully normalized relational data model, as well as an easily navigatable dimensional model

4. Apply techniques to turn a logical data model into an efficient physical design

5. Leverage several templates to make requirements gathering more efficient and accurate

6. Explain all ten categories of the Data Model Scorecard

7. Learn strategies to improve your working relationships with others

8. Appreciate the impact unstructured data has, and will have, on our data modeling deliverables

9. Learn basic UML concepts

10. Put data modeling in context with XML, metadata, and agile development

The Data Model Scorecard is a data model quality scoring tool containing ten categories aimed at improving the quality of your organization’s data models. Many of my consulting assignments are dedicated to applying the Data Model Scorecard to my client’s data models – I will show you how to apply the Scorecard in this book.

This book, written for people who build, use, or review data models, contains the Data Model Scorecard template and an explanation along with many examples of each of the ten Scorecard categories. There are three sections:

In Section I, Data Modeling and the Need for Validation, receive a short data modeling primer in Chapter 1, understand why it is important to get the data model right in Chapter 2, and learn about the Data Model Scorecard in Chapter 3.

In Section II, Data Model Scorecard Categories, we will explain each of the ten categories of the Data Model Scorecard. There are ten chapters in this section, each chapter dedicated to a specific Scorecard category:

In Section III, Validating Data Models, we will prepare for the model review (Chapter 14), cover tips to help during the model review (Chapter 15), and then review a data model based upon an actual project (Chapter 16).

Similar to how the Rosetta Stone provided a communication tool across multiple languages, the Rosedata Stone provides a communication tool across business languages. The Rosedata Stone, called the Business Terms Model (BTM) or the Conceptual Data Model, displays the achievement of a Common Business Language of terms for a particular business initiative.

With more and more data being created and used, combined with intense competition, strict regulations, and rapid-spread social media, the financial, liability, and credibility stakes have never been higher and therefore the need for a Common Business Language has never been greater. Appreciate the power of the BTM and apply the steps to build a BTM over the book’s five chapters:

This book contains three parts, Explanation, Usage, and Impact.

Once you understand blockchain concepts and principles, you can position yourself, department, and organization to leverage distributed ledger technology.

Steve Hoberman has been a data modeler for over 30 years, and thousands of business and data professionals have completed his Data Modeling Master Class. Steve is the author of nine books on data modeling, including The Rosedata Stone and Data Modeling Made Simple. Steve is also the author of Blockchainopoly. One of Steve’s frequent data modeling consulting assignments is to review data models using his Data Model Scorecard® technique. He is the founder of the Design Challenges group, creator of the Data Modeling Institute’s Data Modeling Certification exam, Conference Chair of the Data Modeling Zone conferences, director of Technics Publications, lecturer at Columbia University, and recipient of the Data Administration Management Association (DAMA) International Professional Achievement Award.

Please complete all fields.